AI spend is moving from an experimentation budget to an operating cost. That changes the executive question, especially the question that the CFO has to handle every day: can my organization explain what each AI-supported decision costs, when a premium model is justified, and how quality is monitored once the system is live?

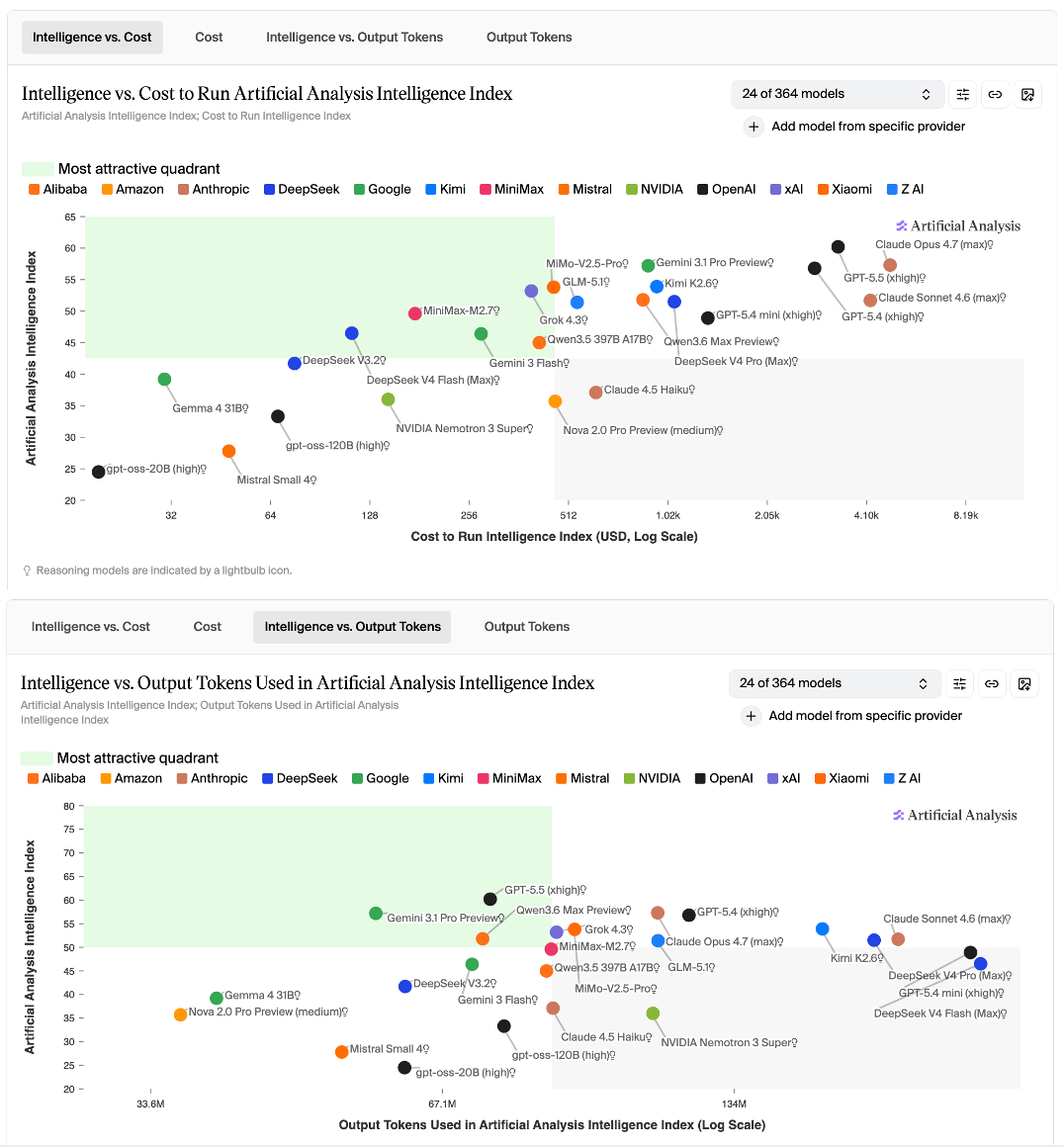

Artificial Analysis makes the cost curve visible. Its model leaderboard compares intelligence, price, speed, latency, and other operating metrics, while its Intelligence Index calculates benchmark cost using model input/output pricing and token usage. Models with similar intelligence scores can sit at very different cost points, and some premium models use far more output tokens to complete the same evaluation suite.

Translating the cost into the enterprise context: enterprise AI cost is beyond just the subscription or API rate. Repeated inference, output volume, latency, escalation, review, and integration constitute the total costs. Epoch AI’s work on inference economics reinforces this point: deployment economics are shaped by the relationship between model size, cost, speed, and hardware constraints.

The business risk is misallocation. Frontier models may still be worth the premium for ambiguous, high-stakes, or tail-risk decisions. They are harder to justify for routine summarization, classification, drafting, internal search, or structured content transformation. However, many companies have not separated those workflows.

This is where AI adoption starts to collide with financial management. McKinsey’s 2025 State of AI survey finds that AI use is now widespread, but only 39% of respondents report enterprise-level EBIT impact, and only about 5.5% of organizations are seeing significant (more than 5%) EBIT impact. The gap is increasingly about redesigning workflows and managing deployment, not simply buying more capability.

The priority order

If AI spend is rising faster than measurable ROI, the first move is an operating review of where AI is being used, what each decision costs, and where quality risk sits.

First, fix the measurement gap. Most companies know the AI vendor bill. Far fewer know the cost per resolved case, completed review, generated recommendation, or escalated decision. Without that baseline, AI spend is not governable.

Second, separate premium work from routine work. Frontier models belong where the cost of error is high: regulatory interpretation, legal review, strategic synthesis, high-value client work, and decisions with asymmetric downside. Mid-tier models should be tested aggressively for repeatable, low-ambiguity work.

Third, manage output volume. Token usage is a cost driver, a latency driver, and often an adoption driver. Structured outputs, token caps, retrieval constraints, and prompt discipline can reduce spend without changing models.

Fourth, build routing before scaling. A portfolio of models only works if the organization can decide which task goes where. a16z points to model abstraction and routing as part of how AI applications manage cost-to-serve. By routing queries to the most cost-effective model (smaller/open-source) rather than always using frontier models, apps maintain sustainable margins.

Three things to do this month

Audit cost per decision by use case. Take the top ten AI workflows by volume and map vendor spend, token consumption, latency, human review rate, and escalation rate. The goal here is to identify where premium capability is being spent on routine work.

Cap output volume on high-frequency workflows. Start with summarization, drafting, classification, and internal Q&A. Require structured outputs, shorter responses, retrieval limits, and explicit token budgets. Measure whether quality changes. Where quality holds, the savings are real.

Pilot dual-model routing on one workflow. Use a lower-cost model as the default. Escalate to a premium model only when confidence is low, the task is ambiguous, or the risk threshold is breached. Add human review above that. Measure cost, quality, latency, and escalation rate over one sprint.

For every CFO: can you describe, by decision type, which model handles it, what it costs, how long it takes, when it escalates, and how you would know if quality degraded?

If not, you do not yet have an AI cost strategy. You only have AI usage.